Good Research vs Bad Research

First things first: at Xmark, we really like good academic research. Good Virtual Reality research can move the whole field forward. It counteracts misinformation. It can also lead to exciting new products and techniques for solving all kinds of problems.

The Good

Here are some examples of things we like:

- USC’s Institute for Creative Technologies has done all kinds of amazing work. We’re particularly fond of their work on redirected walking.

- It probably goes without saying that teams at MIT are all over Virtual Reality and related areas.

- Our friends at Brown University’s Center for Computation and Visualization are also doing some very interesting work.

And of course, there are thousands more research projects happening all around the world.

The Bad

This week saw the release of exactly the kind of academic research we really struggle with. It was picked up by multiple outlets.

The headline below is from PsyPost, “a psychology and neuroscience news website dedicated to reporting the latest research on human behavior, cognition, and society.” As the PsyPost site says, “We are only interested in accurately reporting research about how humans think and behave. And we only report on research that has been published in legitimate, peer-reviewed scientific journals.”

To be clear, I have no reason to doubt PsyPost’s integrity. The headline is pulled directly from the research paper.

And here it is, in all its misinformed glory:

People wearing virtual reality headsets have worse balance and increased mental exertion

New research published in PLOS One has found that virtual reality use impairs physical and cognitive performance while trying to balance.

The Damned Ugly

As a VR practitioner, this is a scary headline. PLOS One is a high-quality, peer-reviewed journal and well respected. Worse balance and increased mental exertion are both strong negatives for VR users, especially for mass market adoption.

If this research were true, it would potentially be a major roadblock to the mass adoption of Virtual Reality.

But here’s the thing. We believe this research is fundamentally flawed. Let me explain.

About the Study

The research was conducted at the University of Michigan, Ann Arbor by Steven M. Peterson, Emily Furuichi and Daniel P. Ferris.

Here’s the abstract:

Virtual reality has been increasingly used in research on balance rehabilitation because it provides robust and novel sensory experiences in controlled environments. We studied 19 healthy young subjects performing a balance beam walking task in two virtual reality conditions and with unaltered view (15 minutes each) to determine if virtual reality high heights exposure induced stress. We recorded number of steps off the beam, heart rate, electrodermal activity, response time to an auditory cue, and high-density electroencephalography (EEG). We hypothesized that virtual high heights exposure would increase measures of physiological stress compared to unaltered viewing at low heights…

Our findings indicate that virtual reality provides realistic experiences that can induce physiological stress in humans during dynamic balance tasks, but virtual reality use impairs physical and cognitive performance during balance.

This starts well. Can VR be used to induce stress? It’s a great question. Anecdotally, I can say yes. But anecdotes are not research, so it’s great to see this being rigorously explored.

I take no issue at all with the first half of their concluding sentence:

Our findings indicate that virtual reality provides realistic experiences that can induce physiological stress in humans during dynamic balance tasks,

But the second half is really problematic:

but virtual reality use impairs physical and cognitive performance during balance.

Again, if this were true, it would have a significant and negative impact on the entire VR industry. So let’s dig in.

Where’s The Problem?

Great question. The sample size isn’t great (19 people), but that’s not my concern. The real problems are two practical issues that the authors seem to largely ignore, and an incredibly outdated experimental setup.

Here’s their description of the toolset:

The virtual environment was rendered using Unity 5 software (Unity Technologies, San Francisco, CA) and included a virtual avatar controlled by the Microsoft Kinect. This computer used a NVIDIA Titan X graphics card (NVIDIA, Santa Clara, CA) to avoid slow-downs in the virtual reality presentation. Because humans more reliably perceive heights when they have a body in virtual reality, each subject had a virtual avatar. This avatar mimicked the subject’s movements in the virtual environment, using the Kinect tracking with the ‘Kinect v2 Examples with MS-SDK’ Unity package. We did not have the Kinect control the avatar’s arms, hands, and toes because the Kinect could not reliably track them during the experiment. Because the Kinect can only reliably track a user that faces it, subjects made beam passes in one direction and walked over-ground in the other direction. Each condition ended after 15 minutes of forward beam passes. We choose a fixed time instead of a fixed number of passes so that subjects were not encouraged to walk faster.

Or in other words, they are using a Kinect to track the user’s movements and render an avatar in the virtual environment.

At this point, the more experienced VR practitioners among you already have questions.

Big Question #1: What about LAG?

The Kinect sensor is a great piece of hardware, but it is also notorious for issues with lag. Don’t take my word for it. Here’s an article from AnandTech that concludes:

At the end of the day, I measured between 8-10 frames of input lag, which at 29.96 FPS works out to 267 ms of input lag…

267ms may not sound like a lot, but in VR, it is a huge amount of lag. What’s more, the Kinect only runs at ~30 frames per second.

By comparison, tracking in an HTC Vive runs at 90 frames per second, with typically 12ms or less of lag. In other words, the Kinect’s lag is 22+ times greater than that of a modern VR system (267ms vs 12ms), and it updates at only a third of the frame rate.

If you are using the avatar to provide realtime feedback on your position and balance, it would seem likely that a 22x greater lag would be a problem.
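For anyone who wants to check the arithmetic, here’s a quick Python sketch. It only uses the figures quoted above (8-10 frames of lag at 29.96 FPS for the Kinect, 90 FPS and 12ms for the Vive). The function and variable names are mine, invented for illustration, and the 12ms figure is the “typical” number from this post, not a measurement.

```python
def frames_to_ms(frames: float, fps: float) -> float:
    """Convert a delay measured in frames into milliseconds."""
    return frames / fps * 1000.0


# Figures quoted in this post (assumptions, not new measurements).
KINECT_FPS = 29.96          # frame rate used in the AnandTech quote
KINECT_LAG_FRAMES = (8, 10) # measured range of input lag, in frames
VIVE_FPS = 90.0             # Vive tracking rate cited above
VIVE_LAG_MS = 12.0          # "typically 12ms or less"

# Kinect lag at the low and high end of the measured range.
kinect_low_ms = frames_to_ms(KINECT_LAG_FRAMES[0], KINECT_FPS)
kinect_high_ms = frames_to_ms(KINECT_LAG_FRAMES[1], KINECT_FPS)

# How many times larger the Kinect's best-case lag is than the Vive's.
lag_ratio = kinect_low_ms / VIVE_LAG_MS

# How many Vive tracking updates fit into one Kinect update.
update_ratio = VIVE_FPS / KINECT_FPS

print(f"Kinect lag: {kinect_low_ms:.0f}-{kinect_high_ms:.0f} ms")
print(f"Lag ratio vs Vive: {lag_ratio:.1f}x")
print(f"Update rate ratio: {update_ratio:.1f}x")
```

Note that the 22x figure comes from the best-case Kinect number (8 frames). At 10 frames, the gap is wider still.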

Big Question #2: What about the lack of arms/hands/toes?

We can ignore the lack of toes. Out of sight, out of mind. Move along. But we can’t ignore arms and hands. Not tracking arms and hands while tracking body movement (and feeding it back to an avatar you can see) is very problematic.

If you’ve ever had the “joy” of working with a poorly calibrated Motion Capture system, you’ll have some insight into the underlying issue here.

I’ve spent many hours working with MoCap. There is nothing more disconcerting than seeing your limbs dance off at odd angles, fall from your body, or suddenly stop moving.

Of course, this is anecdotal data, not rigorous research. However, the idea that a moving torso with frozen arms would not cause some kind of cognitive dissonance is absurd.

And guess what? Cognitive dissonance causes cognitive load. Duh.

Big Question #3: The Biggest Issue…

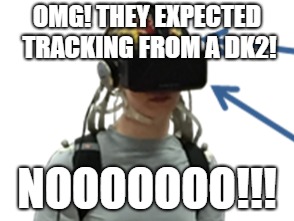

Did you see the image at the top of this post? For the eagle-eyed among you (or the people who read the text on the image), I can confirm that the user is wearing an Oculus DK2. Why did I use that image? It’s from the research paper.

Yes. This leading-edge VR research from 2018 was conducted using an Oculus DK2. A pre-release dev kit launched in July 2014 was used as the foundational piece of VR equipment in this experiment.

Old & Busted vs New Hotness

This is like driving this car:

and expecting performance like this:

The DK2 is far inferior to the current Oculus Rift and HTC Vive in every possible metric. Don’t take my word for it. Here’s a fairly unbiased comparison from a Redditor with 2 years of experience using the DK2.

In particular, pay attention to this paragraph:

Tracking: Superior to DK2. Range and accuracy much improved. And of course you can fully turn around. None of the wobble and loss of tracking that regularly occurred with DK2.

Positional tracking on the DK2 was notoriously bad. I had one. It was unusable. Rotational tracking was fine, but positional tracking barely worked and was incredibly laggy.

And again, if you introduce lag and poor tracking, of course you increase disorientation and cognitive load. The brains of the poor volunteers were trying very hard to compensate for a truly shitty VR experience.

In Conclusion

I have some thoughts.

- The conclusions of this study regarding physical and cognitive performance are clearly unreliable:

    - There was no attempt to address the significant lag inherent in the system.

    - There are significant visual disconnects between what the user experienced and what they could see. This breaks a fundamental rule in developing any good VR experience.

    - The team relied on hardware that has well known and well documented technical limitations that would directly impact immersion and cognitive load.

- The research team clearly did not work with any experienced VR practitioners. I cannot imagine any of the people I know who work in VR signing off on the experimental setup as presented.

- This is a potentially industry-damaging and sensationalist headline based on a very weak experimental design and fundamentally flawed hardware.

Are the Authors Unaware of These Issues?

The authors do seem to have some awareness that maybe there are some issues here. Buried towards the end of the paper, you find the following:

The latency of the headset was 60 frames per second, but the movement generated by the Kinect was approximately 30 frames per second, which was likely noticeable to the user. In addition, the Kinect may not have provided ideal body tracking, which could break a subject’s sense of presence in virtual reality. Such breaks in presence can alter cognitive processing [84] and may have affected how realistic the virtual reality high heights experience felt to each subject.

But then they go on to reassert the clearly hard-to-substantiate claim that:

However, virtual reality can still impair stability even when controlling for latency and field of view … This experiment establishes useful measures for assessing future virtual reality headsets in a dynamic setting.

So if they have any awareness of the flaws in their research, they are happy to bury them.

Damn, Daniel!

This experiment does not, in any way, establish “useful measures for assessing future virtual reality headsets in a dynamic setting.”

If you use a blunt and rusty knife to do surgery, you won’t get useful outcomes.

If you perform a study to explore image quality in online video, but use a standard definition TV from the 1980s as your viewing platform, your results would be garbage.

And guess what? If you use a VR headset that is 4+ years old and has notoriously bad tracking to test how much disorientation a user experiences, your results will be garbage.

Okay, maybe garbage seems harsh. But in your conclusion you make a broad and sweeping statement that is almost certainly caused by the experimental setup you designed.

That’s just bad science.

One More Thing

We are a founding member of RIVR (Rhode Island VR), and we operate with an open-door policy. Anyone interested in VR (or AR) can schedule a visit and ask questions. In the past two years, we’ve welcomed guests from a broad range of academia and industry.

If you, or someone you know, is conducting VR research, please reach out to your local practitioners and get a reality check before you go too far. Do not waste your time and ours with well-intentioned research that is built on a fundamentally flawed approach. It does neither of us any favors.